The recent conference, “Assessing the Security Implications of Genome Editing Technology,” held in Hanover, Germany under the auspices of the Volkswagen Foundation and the US National Academies of Sciences, Engineering, and Medicine, attempted to wrestle with the question of what security challenges are posed by gene editing in humans, animals, and microbes. In the early stages of the conference, David Relman invited the audience to consider broadly and imaginatively what might count as a security threat from gene editing. Yet by the end of the three days of deliberation, a key question remained: what counts as a security threat?

I want to propose, here, that we take an ambitious view of security threats with an eye to prompting imagination about whose well-being we are concerned with securing, and against what.

To begin, let’s paraphrase a claim made by Sir Venki Ramakrishnan, of the UK Royal Society, who in his keynote noted three potential applications of gene editing technology of varying degrees:

- Treating inheritable diseases via the alteration of genomic sequences that in some cases cause, or in other cases are associated with disease

- Cosmetic changes to individuals

- So-called “human enhancement” of individuals or communities above and beyond our statistically normal function

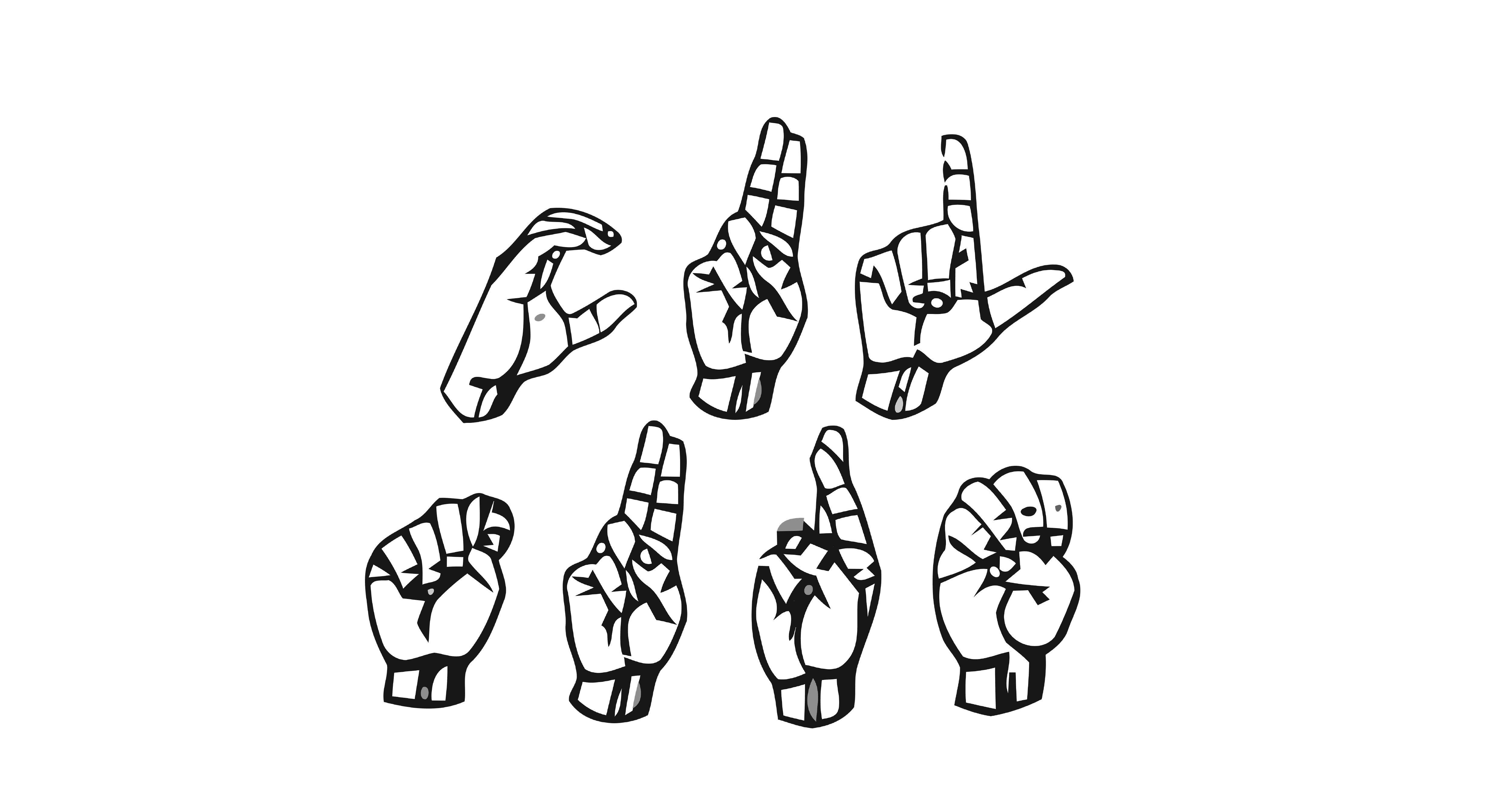

There’s an important omission from this list, however. It is unlikely that members of the signing Deaf communities around the world would consider the use of gene editing technology to eliminate deafness to be any of the applications described above. Certainly, removing genes that cause or are associated with congenital deafness is not, to the Deaf community, treating “disease.” Moreover, these changes would not be simply cosmetic: they would, in effect, remove a person from their community. This concern has been raised in debates about the ethics of the cochlear implant, but germline editing could permanently erase deafness in a profound way.

Is this a security threat? I want to say “that depends,” and what it depends on is what we mean by security.

Let’s start with the threat. Typically, when we talk about threats from deliberate misuse of the life sciences, we think of attacks affecting upwards of tens of thousands of people (although the most recent bioweapon attack in the US infected twenty-two people and killed five of them). The Deaf community satisfies this: even within America, there are thousands of deaf individuals.

A critic might reply that we aren’t killing Deaf folk, so the threat isn’t the same. This fails for two reasons. First, we aren’t only concerned with death. Presumably, a bioweapon that had nonlethal effects would still be a bioweapon. Second, threats can also harm communities: this is at the core of our understanding of threats. An army that moves on your territory is a threat even if it doesn’t fire a shot. Bloodless coups are still coups.

For the Deaf community, a genetic treatment that could proactively erase deafness could absolutely be seen as a threat to the community of the kind that makes it a security risk. There’s a risk here that a genetic treatment could erase a culture: Deaf people form not a mere community, but a culture with its own language, grammar, and knowledge. The erasure of a culture is a unique kind of threat: genocide. Historically, communities have been victims of genocide through genetic means, for example, by being “bred out.” Gene editing in humans, especially at the germline level where heritable changes are made in the human gene pool, could to some constitute a form of genocide.

A reply would be that no nation possesses the intention to enforce such an act. This fails on three counts. The first is that not all threats rely on intent: the risk of a biological accident is today considered a matter of “health security.” Second, genocide has been historically accomplished from within nations without (at least, initially) the support of nations or other official groups. Even if a nation doesn’t possess the intent, individual citizens may.

Finally, and most importantly, when we structure governance against threats we don’t just think about intent in the now. Right now, the taboo against biological weapons is strong, yet we still work to enforce that norm. Norms against radical forms of ethnonationalism and fascist ideologies—historically connected with conceptions of humanity that encourage genocide—were thought to be strong, but have weakened in recent years. When we secure against threats, we typically don’t just secure against threats that exist right now, but against those that could plausibly arise in the future.

So is gene editing a security threat to the Deaf community? On a reasonable interpretation of what security threats look like, we could say “yes,” though the Deaf community themselves may have to be consulted on the final analysis. Gene editing technology is dual-use: the same technology, like other reproductive technologies, could be used to ensure the future of the Deaf community by allowing Deaf parents to have Deaf children. This dual-use capacity, centered on a particular cultural group, demonstrates further why the Deaf community, and also the disability community writ large, ought to hold a seat at the table in meetings like the one in Hanover.

This vignette demonstrates that our security concerns are neither self-evident nor straightforward. Much of our analysis depends on how we define security, but this question didn’t get sufficient play in Hanover in October. That definition will inform how we think about the problem in front of us, and who needs to be in the room to consider that problem. Without a well-considered understanding of what we’re talking about, we can’t make progress, and we risk leaving behind those most vulnerable to the misuse of biotechnology.

(Thanks to Kelly Hills for assisting in refining my thoughts on this issue; remaining errors are the author’s alone.)